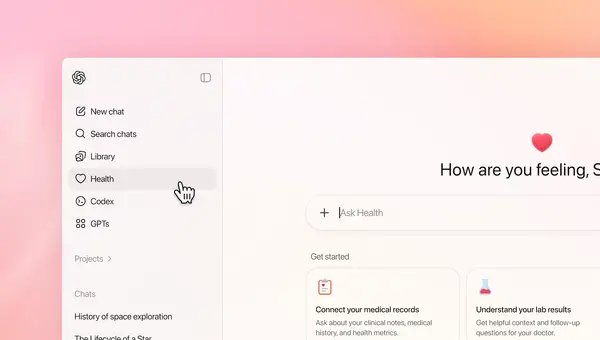

OpenAI has officially introduced ChatGPT Health, a dedicated health-focused experience inside ChatGPT that aims to help users better understand their personal health information. The move reflects a growing trend where people rely on digital tools to track wellness, review test results, and prepare for medical visits. At the same time, it highlights how carefully artificial intelligence must be handled when it intersects with healthcare.

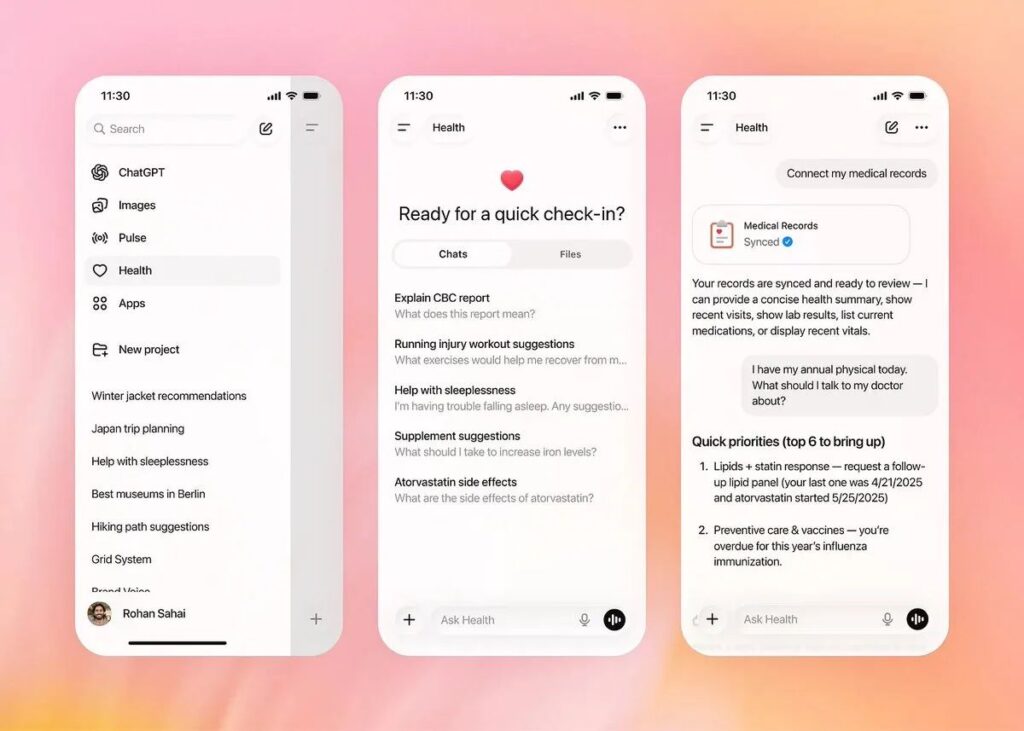

Unlike general chatbot interactions, ChatGPT Health is built to work with real user health data, including medical records and wellness information from supported apps. The idea is simple but ambitious: instead of answering health questions generically, the AI can respond with awareness of the user’s own history, lab values, and daily habits. This makes explanations more relevant and easier to understand, especially for people who struggle to interpret medical language.

Despite this personalization, OpenAI is clear about one thing: ChatGPT Health is not a doctor, does not diagnose illnesses, and does not recommend treatments or medications. It is positioned as a support layer that helps people make sense of information, not as a replacement for professional medical care.

How ChatGPT Health Uses AI

ChatGPT Health uses OpenAI’s latest language models to analyze and explain health information in plain language. Users can ask what a lab result generally means, how lifestyle habits might affect long-term wellness, or what topics to raise during a doctor’s appointment.

The system focuses on explanation rather than instruction. For example, it may help a user understand why cholesterol levels matter or how sleep patterns can influence energy levels. When a question goes beyond safe boundaries, the AI is designed to respond cautiously and encourage professional consultation.

This approach reflects lessons learned from earlier uses of AI in sensitive fields. OpenAI has invested heavily in training the system to avoid overconfidence, speculative answers, and misleading medical claims. The model has been shaped with input from hundreds of physicians across multiple specialties so that responses remain responsible and realistic.

Privacy and Safety at the Core

Health data is among the most sensitive personal information, and OpenAI has built ChatGPT Health with extra layers of protection. Health conversations are separated from normal chats and stored in a more restricted environment. This reduces the risk of accidental data exposure and helps users feel more confident about sharing personal details.

OpenAI also states that health-related conversations are not used to train its AI models. This is an important distinction, as it means personal medical information does not become part of broader learning datasets. Users also maintain control over what data is connected and can disconnect services whenever they choose.

While ChatGPT Health is not classified as a regulated medical system, OpenAI works with established health data partners that follow recognized security standards. This hybrid approach allows innovation while still respecting the seriousness of medical information.

Restrictions in Healthcare AI

Artificial intelligence in healthcare has always faced stricter scrutiny than other sectors, and ChatGPT Health is no exception. Medical decisions can have serious consequences, and even small inaccuracies can cause harm. For this reason, OpenAI has placed clear limits on what ChatGPT Health can and cannot do.

The AI avoids giving direct medical advice, prescribing actions, or suggesting diagnoses. Instead, it focuses on education, context, and preparation. If a user asks something that could lead to unsafe conclusions, the system is trained to respond more cautiously, add disclaimers, and guide the user toward qualified professionals.

These restrictions are not temporary. They reflect a broader industry understanding that AI should support healthcare systems, not bypass them. Regulators, hospitals, and technology companies continue to debate how far AI should go in medicine, and OpenAI’s cautious rollout suggests it is choosing long-term trust over rapid expansion.

Gradual Rollout and Access

ChatGPT Health is not available to everyone immediately. OpenAI is releasing the feature in stages, starting with a limited group of users. Some integrations, such as linking certain health and fitness apps, are currently restricted by region.

This slow rollout allows OpenAI to monitor real-world usage, identify potential issues, and refine safety systems before wider adoption. It also reflects the reality that healthcare technology requires more testing than typical consumer software.

What This Means

ChatGPT Health represents a meaningful step toward personalized digital health assistance, but it also shows restraint. Instead of promising AI-powered diagnosis or automated care, OpenAI is focusing on understanding, clarity, and user empowerment.

As more people manage their health digitally, tools like ChatGPT Health could reduce confusion, improve communication with doctors, and encourage informed decision-making. However, the responsibility still rests with humans, both patients and professionals.

In a time when AI is advancing rapidly, ChatGPT Health stands out not for how much it claims to do, but for how carefully it defines what it should not do. That balance may ultimately determine whether AI earns a trusted place in healthcare.